The AI Agent Cheat Sheet: What You Need to Know

Go beyond basic bots: Your essential guide to the different flavors of AI agents transforming industries today.

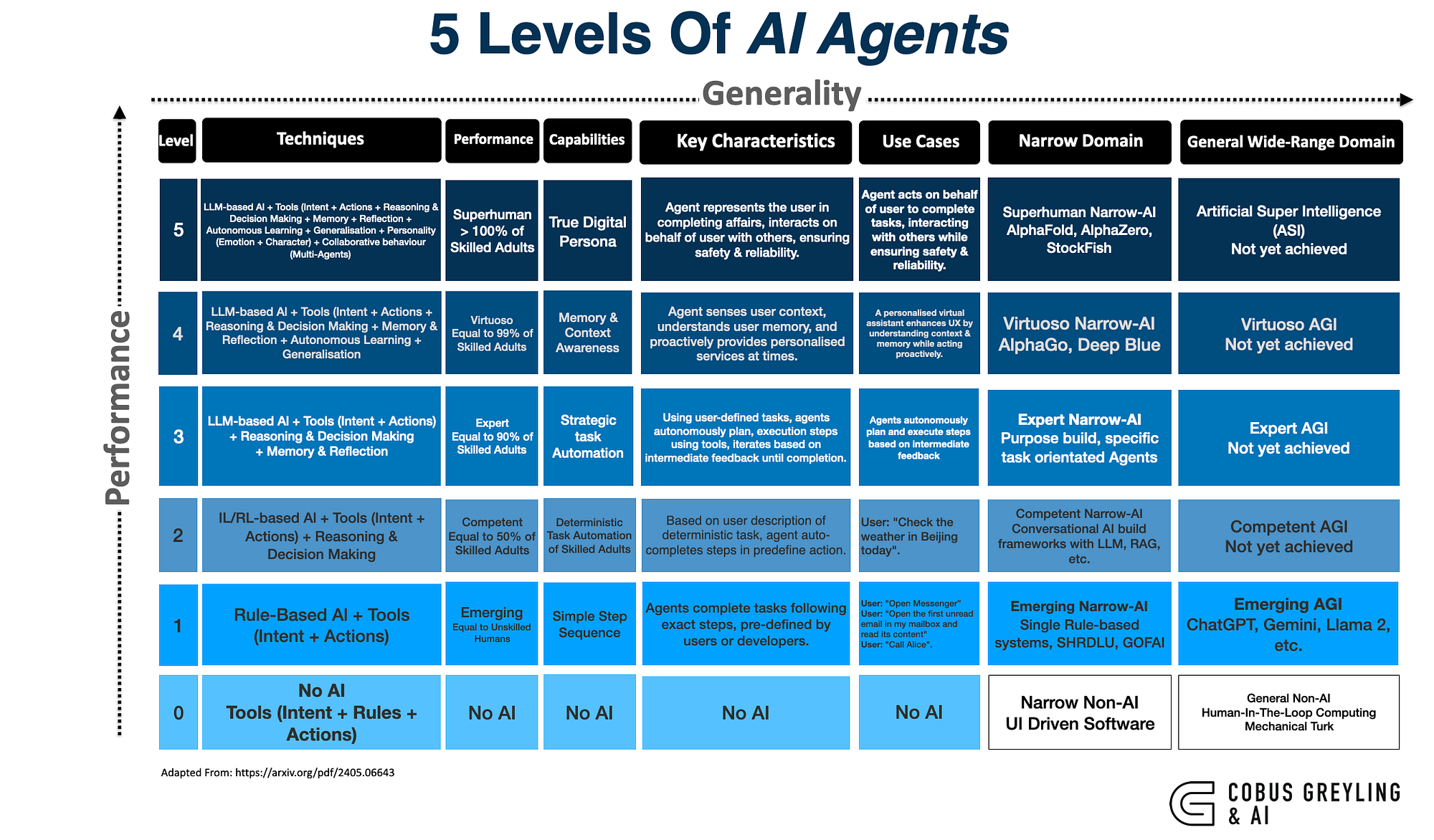

They're everywhere now. Silent. Working. Learning. AI agents have infiltrated our digital ecosystem with such subtlety that we barely notice their presence anymore. Yet they're making decisions that impact everything from what shows up in your social feed to how your company allocates resources.

I've spent the last decade watching these digital entities evolve from clunky rule-followers to sophisticated decision-makers that can outthink humans in specific domains. At 1985, our software development teams integrate with these agents daily. The landscape has changed dramatically.

This isn't just another tech evolution. It's a fundamental shift in how software operates in our world.

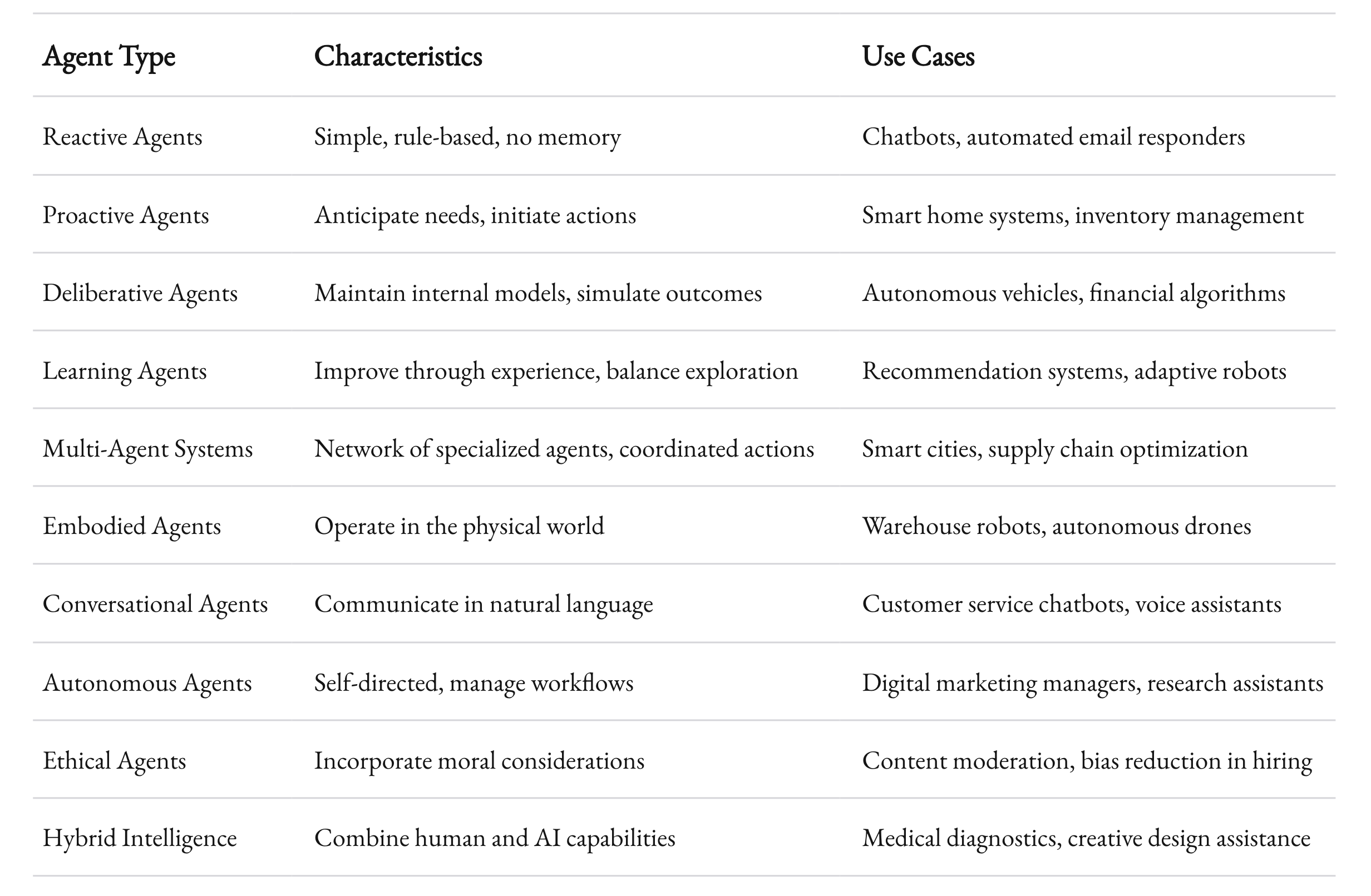

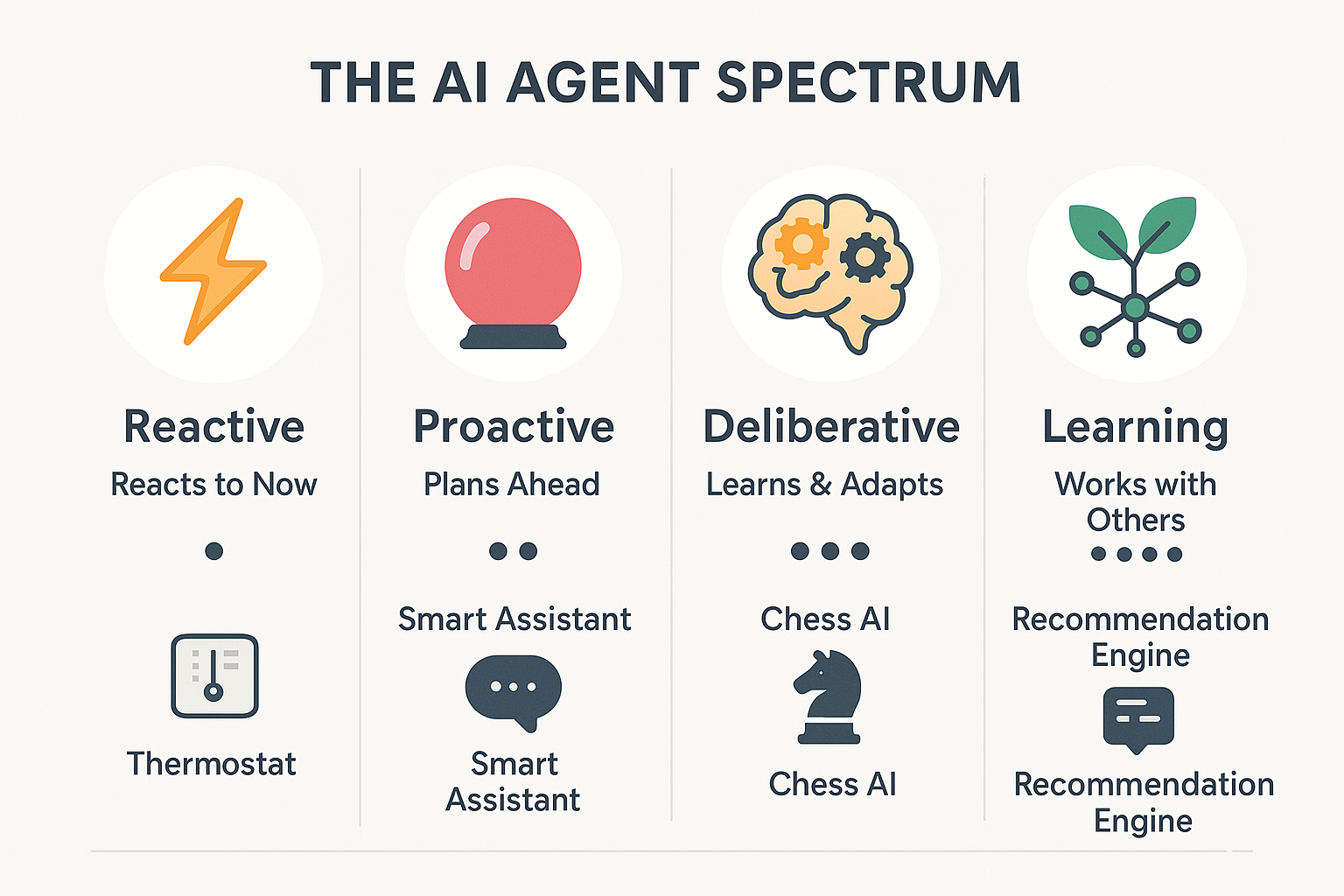

Reactive Agents: The Digital Reflexes

The simplest agents react. Nothing more. They perceive their environment and respond according to predefined rules. No memory. No planning. Just stimulus and response.

These reactive agents form the foundation of many systems we interact with daily. The thermostat that kicks on when temperature drops. The chatbot that responds to specific keywords. The automated email responder that acknowledges your customer service request.
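At its core, a reactive agent is a list of condition-action rules checked against the current percept, with no stored state. The minimal sketch below models the thermostat example. The thresholds and the names `Rule`, `THERMOSTAT_RULES`, and `reactive_agent` are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass(frozen=True)
class Rule:
    """A condition-action pair: fire `action` when `condition(percept)` is true."""
    condition: Callable[[float], bool]
    action: str

# Hypothetical thermostat rules (temperatures in °C); the first matching rule wins.
THERMOSTAT_RULES: List[Rule] = [
    Rule(lambda temp: temp < 19.0, "heat_on"),
    Rule(lambda temp: temp > 23.0, "cool_on"),
]

def reactive_agent(temp: float, rules: List[Rule] = THERMOSTAT_RULES) -> str:
    """Map the current percept directly to an action: no memory, no planning."""
    for rule in rules:
        if rule.condition(temp):
            return rule.action
    return "idle"  # default when no rule fires

for reading in (17.5, 21.0, 25.2):
    print(reading, "->", reactive_agent(reading))
```

The output depends only on the current reading, so the same input always produces the same action. That is where the predictability of reactive agents comes from.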

What makes reactive agents powerful isn't their complexity—it's their reliability. They execute their function with perfect consistency, never deviating from their programming. This predictability makes them ideal for controlled environments where the rules are clear and exceptions are rare.

According to a 2023 Gartner report, 78% of customer service interactions still rely primarily on reactive agents for initial triage, despite advances in more sophisticated AI. The reason? They're cost-effective and reliable for handling routine tasks that don't require contextual understanding.

At 1985, we've implemented reactive agents for clients who need dependable, rule-based responses in mission-critical systems. One manufacturing client reduced error rates by 37% after implementing reactive agents to monitor production line anomalies. The agents couldn't explain the anomalies, but they could flag them instantly for human review.

Proactive Agents: Anticipating Needs Before They Arise

Unlike their reactive counterparts, proactive agents don't wait for stimuli. They initiate actions based on goals, predictions, and patterns they've identified.

The email assistant that suggests responses before you've finished reading the message. The inventory management system that orders new stock before you run out. The smart home that adjusts temperature before you arrive. These are all proactive agents at work.

What distinguishes truly effective proactive agents is their ability to balance initiative with restraint. An agent that constantly makes suggestions becomes noise. An agent that rarely offers help becomes useless. The sweet spot lies in understanding not just what the user might need, but when and how to offer assistance.
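The inventory example, along with the need for restraint, can be sketched using the classic reorder-point rule. The agent acts before stock runs out, but only when stock reaches the reorder point, and it never stacks a second order on top of a pending one. This is a minimal illustration under assumed parameters: a fixed lead time, a safety-stock buffer, and a fixed order quantity. `ProactiveInventoryAgent` is a hypothetical name, and real systems would use richer demand forecasts.

```python
from statistics import mean
from typing import List

def reorder_point(daily_demand: List[float], lead_time_days: int, safety_stock: float) -> float:
    """Expected demand during the resupply lead time, plus a safety buffer."""
    return mean(daily_demand) * lead_time_days + safety_stock

class ProactiveInventoryAgent:
    """Hypothetical agent that orders stock before it runs out, with restraint."""

    def __init__(self, lead_time_days: int, safety_stock: float, order_qty: int):
        self.lead_time_days = lead_time_days
        self.safety_stock = safety_stock
        self.order_qty = order_qty
        self.order_pending = False

    def step(self, on_hand: float, recent_demand: List[float]) -> int:
        """Return the quantity to order today (0 means hold back)."""
        rop = reorder_point(recent_demand, self.lead_time_days, self.safety_stock)
        if on_hand <= rop and not self.order_pending:
            self.order_pending = True   # initiative: act ahead of the stockout
            return self.order_qty
        return 0                        # restraint: no need, or already handled

    def receive(self) -> None:
        """Call when the pending order arrives."""
        self.order_pending = False

agent = ProactiveInventoryAgent(lead_time_days=3, safety_stock=10, order_qty=100)
demand = [10, 12, 8]  # mean 10 units/day, so the reorder point is 10 * 3 + 10 = 40
print(agent.step(on_hand=55, recent_demand=demand))  # 0: still above 40
print(agent.step(on_hand=38, recent_demand=demand))  # 100: order ahead of the stockout
print(agent.step(on_hand=30, recent_demand=demand))  # 0: an order is already pending
```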

A McKinsey analysis from 2024 found that businesses implementing proactive AI agents in customer relationship management saw a 23% increase in customer retention compared to those using only reactive systems. The difference? Proactive systems identified potential issues before customers experienced them.

"The shift from reactive to proactive AI represents the difference between digital assistants and digital colleagues," notes Dr. Elaine Chen, AI Ethics researcher at MIT. "One waits for commands; the other participates in the work."

Deliberative Agents: The Digital Thinkers

When an agent needs to consider multiple possible futures before acting, we enter the realm of deliberative agents. These systems maintain internal models of their world, simulate potential outcomes, and select actions based on expected results.
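The core deliberative loop can be sketched as expected-utility maximization: simulate each candidate action with an internal model, score the outcomes, and pick the best. The route names, probabilities, and travel times below are invented illustrative values, not data from any real system.

```python
from typing import Dict, List, Tuple

# Internal world model: for each action, a list of (probability, outcome) pairs.
# Hypothetical example: travel time in minutes for three candidate routes.
Model = Dict[str, List[Tuple[float, float]]]

WORLD_MODEL: Model = {
    "highway":   [(0.7, 30.0), (0.3, 70.0)],   # fast unless there is a jam
    "city":      [(1.0, 45.0)],                # slow but predictable
    "back_road": [(0.5, 35.0), (0.5, 55.0)],
}

def utility(minutes: float) -> float:
    """Shorter trips are better, so utility is negative travel time."""
    return -minutes

def expected_utility(action: str, model: Model) -> float:
    """Simulate one action with the model and average the resulting utilities."""
    return sum(p * utility(outcome) for p, outcome in model[action])

def deliberate(model: Model) -> str:
    """Weigh every available action before committing to the best one."""
    return max(model, key=lambda a: expected_utility(a, model))

for action in WORLD_MODEL:
    print(action, expected_utility(action, WORLD_MODEL))
print("chosen:", deliberate(WORLD_MODEL))  # chosen: highway
```

If the highway's jam probability in the model rises high enough, the decision flips to another route without any change to the code. This is how a deliberative agent adapts: it revises its internal model rather than its rules.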

The autonomous vehicle plotting a route through traffic. The financial algorithm evaluating market conditions before executing trades. The recommendation engine weighing your preferences against available options. All deliberative agents, considering possibilities before committing to action.

What makes deliberative agents particularly valuable is their ability to operate in complex, dynamic environments where simple rules don't suffice. They can adapt to changing conditions by revising their internal models and adjusting their strategies accordingly.

The computational cost of deliberation creates interesting trade-offs. More deliberation generally leads to better decisions but slower response times. In time-sensitive applications, finding the right balance between thinking and acting becomes crucial.
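One common way to manage the trade-off between thinking and acting is an anytime search. The agent deepens its lookahead one level at a time, and when its budget runs out it acts on the best plan from the deepest search it finished. In the sketch below, a toy number puzzle stands in for a real planning problem, and the budget counts expanded nodes rather than wall-clock time so the result is deterministic. All names here are hypothetical.

```python
from typing import Callable, List, Tuple

# Toy planning problem (hypothetical): turn a start number into a goal number.
MOVES: List[Tuple[str, Callable[[int], int]]] = [
    ("+1", lambda x: x + 1),
    ("*2", lambda x: x * 2),
]

class BudgetExhausted(Exception):
    """Raised when the agent runs out of thinking budget mid-search."""

def search(state: int, goal: int, depth: int, used: List[int], budget: int) -> Tuple[int, List[str]]:
    """Depth-limited lookahead returning (distance to goal, best move sequence)."""
    used[0] += 1                                   # one more node expanded
    if used[0] > budget:
        raise BudgetExhausted
    best: Tuple[int, List[str]] = (abs(goal - state), [])   # option: stop here
    if depth == 0 or state == goal:
        return best
    for name, move in MOVES:
        dist, plan = search(move(state), goal, depth - 1, used, budget)
        if dist < best[0] or (dist == best[0] and len(plan) + 1 < len(best[1])):
            best = (dist, [name] + plan)
    return best

def anytime_plan(start: int, goal: int, node_budget: int) -> Tuple[int, List[str]]:
    """Iterative deepening: return the best plan from the deepest completed search."""
    best: Tuple[int, List[str]] = (abs(goal - start), [])
    used = [0]                                     # nodes expanded, shared across depths
    depth = 1
    while True:
        try:
            best = search(start, goal, depth, used, node_budget)
        except BudgetExhausted:
            return best                            # out of time: act on what we have
        if best[0] == 0:
            return best                            # exact solution found, stop thinking
        depth += 1

for budget in (10, 25, 60):
    print(budget, anytime_plan(1, 10, budget))
```

With a small budget, the agent commits early to a plan that lands short of the goal. With a larger budget, it finds an exact four-move plan, but only after expanding more nodes.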

At 1985, we recently completed a project for a logistics company, implementing deliberative agents to optimize its routing. The system considers weather patterns, traffic data, delivery priorities, and fuel efficiency to plot optimal routes. The result? A 17% reduction in fuel costs and a 22% improvement in on-time deliveries.

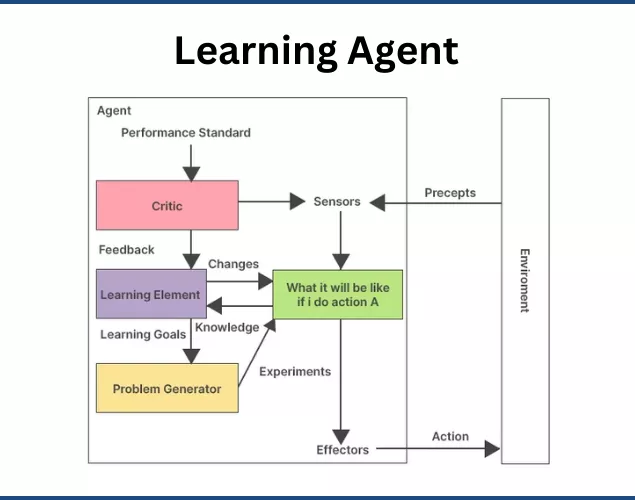

Learning Agents: Evolution Through Experience

Perhaps the most fascinating category, learning agents improve their performance over time through experience. They start with initial capabilities but continuously refine their models and strategies based on outcomes.

The recommendation system that gets better at suggesting products as you interact with it. The language model that adapts to your writing style. The manufacturing robot that improves its precision with each repetition. These agents don't just perform tasks—they get better at them.

What distinguishes effective learning agents is their ability to balance exploration (trying new approaches) with exploitation (leveraging what already works). Too much exploration leads to inconsistent performance. Too much exploitation prevents discovery of better methods.
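A common textbook illustration of the exploration/exploitation balance is the epsilon-greedy multi-armed bandit. It is one standard technique among several; UCB and Thompson sampling are others. The sketch below implements that textbook method and does not describe any specific production system. The three success rates are invented for the simulation.

```python
import random

class EpsilonGreedyAgent:
    """Bandit learner: explore with probability epsilon, otherwise exploit."""

    def __init__(self, n_actions: int, epsilon: float = 0.1, seed: int = 0):
        self.epsilon = epsilon
        self.counts = [0] * n_actions
        self.values = [0.0] * n_actions      # running mean reward per action
        self.rng = random.Random(seed)

    def choose(self) -> int:
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(self.values))                  # explore
        return max(range(len(self.values)), key=self.values.__getitem__)  # exploit

    def update(self, action: int, reward: float) -> None:
        # Incremental mean: new_mean = old_mean + (reward - old_mean) / n
        self.counts[action] += 1
        self.values[action] += (reward - self.values[action]) / self.counts[action]

# Simulated environment; the true success rates are hidden from the agent.
env_rng = random.Random(42)
true_success = [0.2, 0.5, 0.8]
agent = EpsilonGreedyAgent(n_actions=3, epsilon=0.1, seed=1)
for _ in range(2000):
    a = agent.choose()
    reward = 1.0 if env_rng.random() < true_success[a] else 0.0
    agent.update(a, reward)
print(agent.counts, [round(v, 2) for v in agent.values])
```

`epsilon` is the dial described in the paragraph above. At 0, the agent never explores and can lock onto a mediocre early choice. At 1, it never exploits what it has learned.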

According to OpenAI's 2024 industry report, systems employing learning agents show an average 34% improvement in task-specific performance metrics over their first six months of deployment, compared to static systems that show no significant improvement.

The implementation of learning agents requires careful consideration of feedback mechanisms. An agent can only learn from the feedback it receives, whether that's explicit (user ratings, corrections) or implicit (user engagement, task completion rates).

"The quality of a learning agent's improvement is directly proportional to the quality of feedback it receives," explains Dr. Rajesh Sharma, AI researcher at Stanford. "Garbage in, garbage out applies even more strongly to learning systems than traditional software."

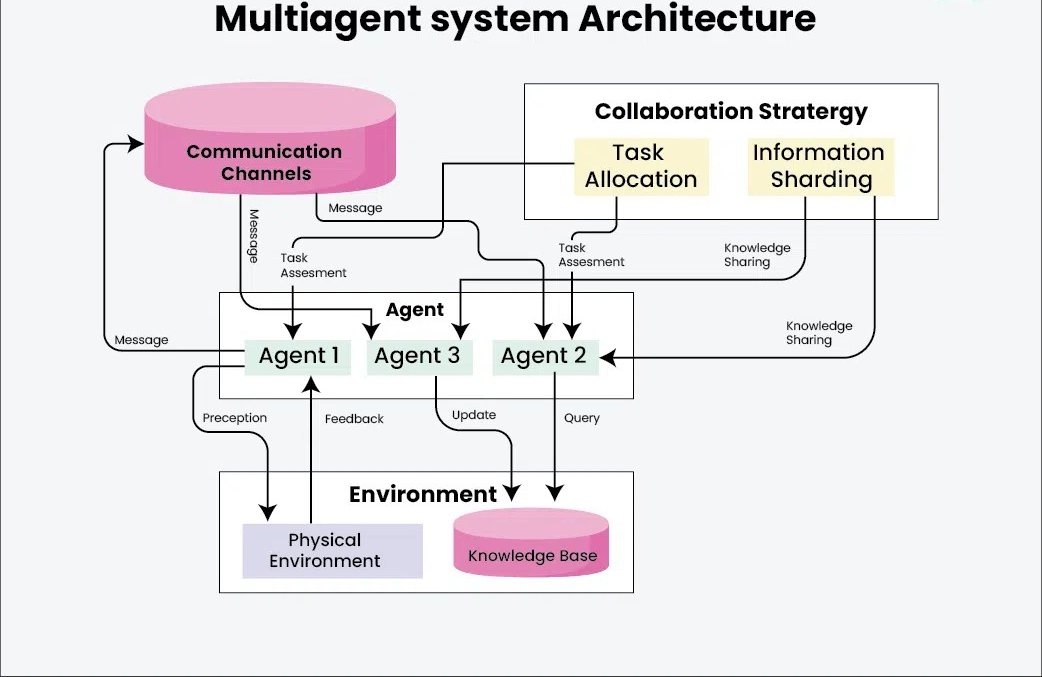

Multi-Agent Systems: The Digital Ecosystem

Individual agents are powerful, but networks of specialized agents working together can tackle problems of extraordinary complexity. These multi-agent systems distribute tasks among specialized components that communicate, coordinate, and sometimes compete to achieve objectives.

The smart city that balances traffic flow, energy usage, and public safety through interconnected systems. The financial market where trading algorithms interact to determine prices. The supply chain optimization platform where procurement, inventory, and logistics agents coordinate to maintain efficient operations.

What makes multi-agent systems particularly powerful is their ability to decompose complex problems into manageable components while maintaining coordination toward overall goals. This approach mirrors how human organizations function, with specialists handling different aspects of a shared mission.
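The decomposition-plus-coordination idea can be sketched as a coordinator that runs specialist agents over a shared workspace (a "blackboard"). The four roles below mirror the customer-service example in this section: sentiment analysis, knowledge retrieval, response generation, and quality assurance. Each specialist is a toy keyword heuristic, and every name, including the knowledge-base entry, is hypothetical. Real systems typically use trained models and often message passing or concurrent execution instead of a fixed sequence.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class Ticket:
    """Shared workspace that every specialist reads from and writes to."""
    text: str
    notes: Dict[str, str] = field(default_factory=dict)

Agent = Callable[[Ticket], None]

def sentiment_agent(t: Ticket) -> None:
    negative = any(w in t.text.lower() for w in ("angry", "broken", "refund"))
    t.notes["sentiment"] = "negative" if negative else "neutral"

KNOWLEDGE_BASE = {"refund": "You can request a refund from your order page."}  # hypothetical

def retrieval_agent(t: Ticket) -> None:
    hits = [v for k, v in KNOWLEDGE_BASE.items() if k in t.text.lower()]
    t.notes["facts"] = " ".join(hits)

def response_agent(t: Ticket) -> None:
    opener = "Sorry for the trouble. " if t.notes["sentiment"] == "negative" else ""
    t.notes["draft"] = opener + (t.notes["facts"] or "Let me look into that for you.")

def qa_agent(t: Ticket) -> None:
    # Quality gate: escalate to a human when nothing relevant was retrieved.
    t.notes["escalate"] = "no" if t.notes["facts"] else "yes"

def orchestrate(text: str, pipeline: List[Agent]) -> Ticket:
    """Coordinator: run each specialist in order over the shared ticket."""
    ticket = Ticket(text)
    for agent in pipeline:
        agent(ticket)
    return ticket

PIPELINE = [sentiment_agent, retrieval_agent, response_agent, qa_agent]
result = orchestrate("My order arrived broken, I want a refund", PIPELINE)
print(result.notes)
```

Because each specialist touches only its own slice of the ticket, any one of them can be replaced or improved without modifying the others. This is the maintainability benefit of decomposition.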

A 2023 study by Deloitte found that enterprises implementing multi-agent AI architectures reported 41% higher ROI on their AI investments compared to those using monolithic approaches. The difference stems from improved scalability, maintainability, and adaptability.

At 1985, we've seen this firsthand. One of our enterprise clients transitioned from a monolithic AI system to a multi-agent architecture for their customer service platform. The new system uses specialized agents for sentiment analysis, knowledge retrieval, response generation, and quality assurance—all coordinating to handle customer inquiries. The result was a 28% improvement in first-contact resolution rates and a 45% reduction in escalations to human agents.

![Embodied AI: How do AI-powered robots perceive the world? (Qualcomm)](https://www.qualcomm.com/content/dam/qcomm-martech/dm-assets/images/blog/ai-product/Embodied-AI-robots-learn-through-interaction-with-a-physical-environment.png)

Embodied Agents: AI in the Physical World

When AI moves beyond the digital realm into physical systems, we enter the domain of embodied agents. These entities perceive and act in the physical world, bridging the gap between digital intelligence and physical capability.

The warehouse robot navigating aisles to fulfill orders. The autonomous drone inspecting infrastructure. The surgical assistant helping doctors perform precise procedures. These embodied agents must contend not just with information but with the messy, unpredictable nature of physical reality.

What distinguishes effective embodied agents is their ability to handle the uncertainty and variability of the physical world. Digital environments can often be made clean, deterministic, and fully observable. Physical environments are noisy, probabilistic, and only partially observable: sensors return imperfect readings, and the agent never sees its true state directly.
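Partial observability and the perception-action loop can be sketched with a one-dimensional robot. It never observes its true position. Instead, it fuses noisy sensor readings with a standard 1D Kalman filter, moves based on that belief, and then predicts how its own move will change the next reading. The noise levels, the 0.3 controller gain, and the target are arbitrary illustrative values, and the function names are hypothetical.

```python
import random
from typing import Tuple

MEAS_SD = 0.5     # hypothetical sensor noise (standard deviation)
MOTION_SD = 0.1   # hypothetical actuator noise (standard deviation)

def kalman_update(est: float, var: float, reading: float, meas_var: float) -> Tuple[float, float]:
    """Fuse the current belief with a noisy reading (1D Kalman measurement update)."""
    gain = var / (var + meas_var)
    return est + gain * (reading - est), (1.0 - gain) * var

def run_embodied_agent(target: float = 10.0, steps: int = 50, seed: int = 0) -> Tuple[float, float]:
    """Perceive -> update belief -> act -> predict, repeated. Returns (true, believed) position."""
    rng = random.Random(seed)
    true_pos = 0.0          # the real state, never observed directly
    est, var = 0.0, 1.0     # the agent's belief about its position
    for _ in range(steps):
        # Perceive: a noisy reading of where the agent actually is.
        reading = true_pos + rng.gauss(0.0, MEAS_SD)
        est, var = kalman_update(est, var, reading, MEAS_SD ** 2)
        # Act on the belief: move 30% of the remaining distance (a simple P-controller).
        command = 0.3 * (target - est)
        true_pos += command + rng.gauss(0.0, MOTION_SD)   # the world adds its own noise
        # Predict: the agent's own action shifts what it expects to perceive next.
        est += command
        var += MOTION_SD ** 2
    return true_pos, est

true_pos, belief = run_embodied_agent()
print(f"true position {true_pos:.2f}, believed position {belief:.2f}")
```

The prediction step captures the coupling: every action changes the state, and therefore the next perception, so the agent must account for its own actions when interpreting what it senses.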

According to Boston Consulting Group's 2024 robotics industry analysis, companies deploying embodied AI agents in manufacturing environments see an average 32% reduction in quality control issues compared to traditional automation. The key difference is adaptability—embodied agents can adjust to variations that would derail conventional automation.

"The challenge of embodied AI isn't just perception or action—it's the tight coupling between them," notes Dr. Melanie Mitchell, AI researcher and author. "An agent must understand how its actions affect what it will perceive next, creating a continuous feedback loop."

Conversational Agents: The Digital Communicators

Perhaps the most visible AI agents in our daily lives are conversational agents—systems designed to communicate with humans through natural language. These range from simple chatbots to sophisticated digital assistants capable of nuanced dialogue.

The customer service chatbot answering product questions. The voice assistant scheduling your appointments. The language model helping draft your emails. These agents interpret human language, extract meaning, and generate appropriate responses.

What distinguishes effective conversational agents is their ability to maintain context over extended interactions. Early chatbots treated each user message in isolation. Modern conversational agents track the flow of dialogue, remember previous exchanges, and maintain coherent conversations over time.
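Context tracking can be sketched as a bounded window of recent turns plus persistent "slots" that carry information from one message to the next. Toy keyword matching stands in for real language understanding, and `DialogueState` and its slot names are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, Optional, Tuple

DAYS = ("monday", "tuesday", "wednesday", "thursday", "friday")

class DialogueState:
    """Keeps recent turns plus persistent slots so context survives across messages."""

    def __init__(self, max_turns: int = 6):
        self.history: Deque[Tuple[str, str]] = deque(maxlen=max_turns)  # (speaker, text)
        self.slots: Dict[str, str] = {}

    def user_says(self, text: str) -> str:
        self.history.append(("user", text))
        lowered = text.lower()
        # Toy slot filling: remember the intent and any weekday mentioned.
        if "appointment" in lowered or "book" in lowered:
            self.slots["intent"] = "book_appointment"
        for day in DAYS:
            if day in lowered:
                self.slots["day"] = day
        reply = self._respond()
        self.history.append(("agent", reply))
        return reply

    def _respond(self) -> str:
        intent: Optional[str] = self.slots.get("intent")
        day = self.slots.get("day")
        if intent == "book_appointment" and day:
            return f"Booking your appointment for {day.capitalize()}."
        if intent == "book_appointment":
            return "Which day works for you?"
        return "How can I help?"

bot = DialogueState()
print(bot.user_says("I need to book an appointment"))  # Which day works for you?
print(bot.user_says("Tuesday, please"))                # Booking your appointment for Tuesday.
print(bot.user_says("Actually, make it Friday"))       # Booking your appointment for Friday.
```

The last message never mentions an appointment. The agent answers correctly only because the intent from the first turn is still in its state, which is exactly what early, stateless chatbots lacked.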

A 2024 survey by Forrester Research found that 67% of consumers now prefer interacting with well-designed conversational agents for routine customer service inquiries, up from just 38% in 2021. The key factors driving this shift were 24/7 availability, consistent information, and increasingly natural interactions.

At 1985, we've implemented specialized conversational agents for several clients in regulated industries like healthcare and finance. These systems must balance natural communication with strict compliance requirements. One healthcare client saw patient satisfaction scores increase by 22% after implementing a conversational agent for appointment scheduling and basic health questions, while reducing administrative staff workload by 34%.

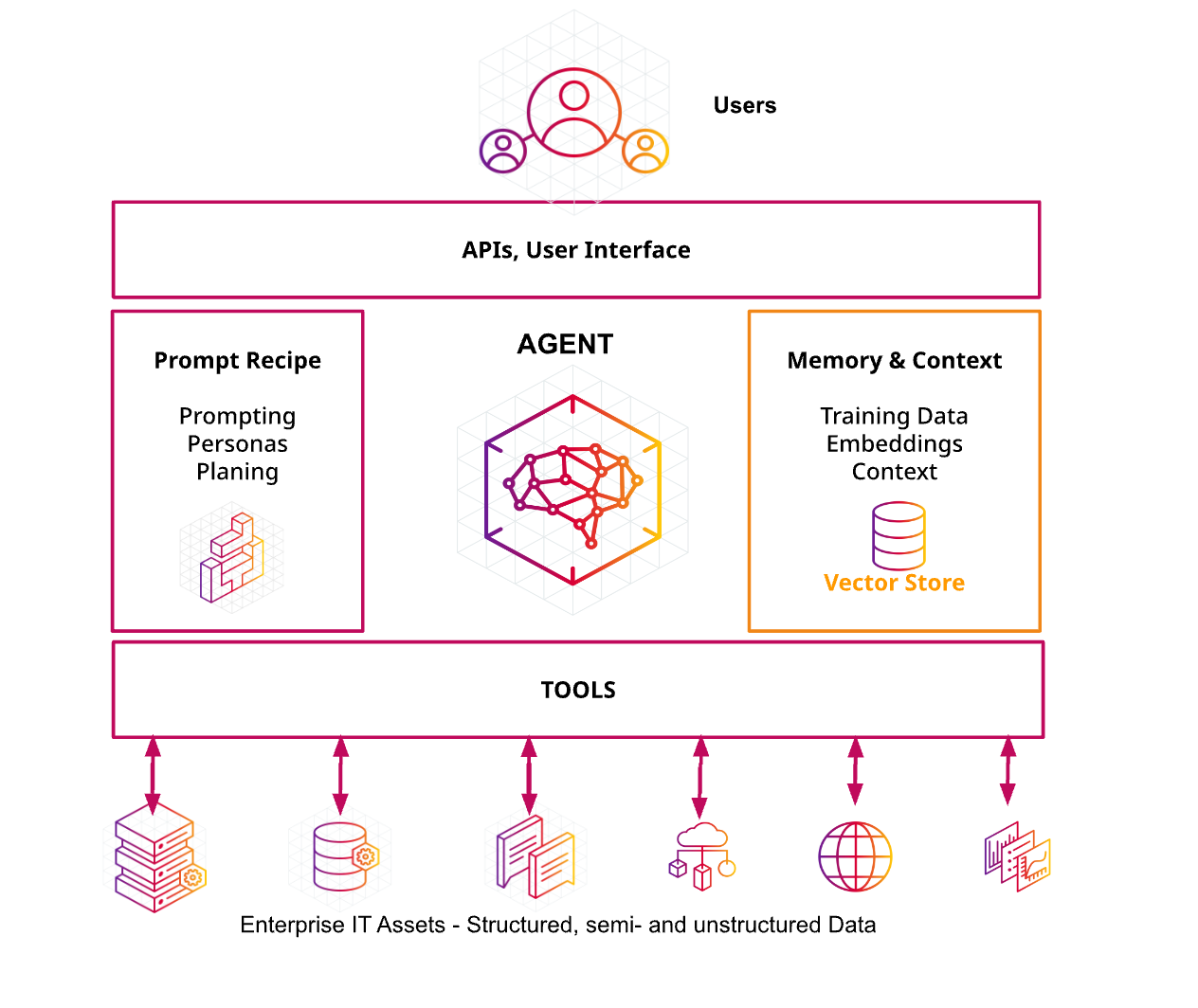

Autonomous Agents: The Self-Directed Digital Workers

The frontier of AI agency lies in autonomous agents—systems that can independently pursue complex goals with minimal human oversight. These agents combine elements from other categories but add a crucial capability: self-direction toward long-term objectives.

The AI researcher that scours scientific literature to identify promising research directions. The digital marketing manager that plans, executes, and optimizes campaigns. The software development assistant that writes, tests, and deploys code. These autonomous agents don't just perform tasks—they manage entire workflows.

What distinguishes truly autonomous agents is their ability to decompose high-level goals into actionable steps, monitor their own progress, and adapt their approach when faced with obstacles. They don't just follow instructions—they interpret intentions and find ways to fulfill them.
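The decompose, act, monitor, and adapt cycle can be sketched as a small plan-execute loop with fallbacks and a retry limit. The plan library, the fallback table, and the simulated `fake_execute` environment are all hypothetical. In a real autonomous agent, the decomposition would come from a planner or a language model rather than a hard-coded dictionary.

```python
from typing import Callable, Dict, List

# Hypothetical plan library: each goal decomposes into ordered sub-steps.
PLANS: Dict[str, List[str]] = {"ship_feature": ["write_code", "run_tests", "deploy"]}
# Hypothetical workarounds to try when a step fails.
FALLBACKS: Dict[str, List[str]] = {"run_tests": ["fix_failing_test"]}

def autonomous_run(goal: str, execute: Callable[[str], bool], max_attempts: int = 3) -> List[str]:
    """Decompose a goal, execute steps, monitor results, and adapt on failure."""
    log: List[str] = []
    for step in PLANS[goal]:                       # 1. decompose the goal
        for attempt in range(1, max_attempts + 1):
            if execute(step):                      # 2. act and monitor the result
                log.append(f"{step}: ok")
                break
            log.append(f"{step}: failed (attempt {attempt})")
            for fix in FALLBACKS.get(step, []):    # 3. adapt: try a workaround
                log.append(f"{fix}: {'ok' if execute(fix) else 'failed'}")
        else:
            log.append(f"giving up on {goal}")     # retries exhausted: hand back to a human
            return log
    log.append(f"{goal}: done")
    return log

# Simulated environment: tests fail until the agent applies its fix.
state = {"tests_fixed": False}

def fake_execute(step: str) -> bool:
    if step == "fix_failing_test":
        state["tests_fixed"] = True
        return True
    if step == "run_tests":
        return state["tests_fixed"]
    return True

for line in autonomous_run("ship_feature", fake_execute):
    print(line)
```

The retry limit is a deliberate design choice. It bounds how long the agent pursues a failing approach before escalating, which keeps "minimal human oversight" from becoming no oversight.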

According to a 2024 MIT Technology Review survey, organizations implementing autonomous agent systems report an average 43% reduction in routine knowledge work hours, allowing human workers to focus on more creative and strategic tasks.

"The most powerful autonomous agents aren't those that replace humans, but those that amplify human capabilities by handling routine aspects of complex work," explains Dr. Fei-Fei Li, AI researcher and professor at Stanford. "They're less like robots and more like highly competent assistants."

Ethical Agents: The Moral Dimension of AI

As AI agents gain more autonomy and influence, the question of their ethical frameworks becomes increasingly important. Ethical agents incorporate moral considerations into their decision-making processes, weighing not just efficiency and effectiveness but also fairness, transparency, and human welfare.

The content moderation system that balances free expression with harm prevention. The hiring algorithm designed to reduce bias in candidate selection. The autonomous vehicle programmed to make split-second decisions that minimize harm in unavoidable accidents. These systems must navigate complex ethical terrain.

What distinguishes effective ethical agents is their ability to make their moral frameworks explicit and adjustable. Different contexts and cultures may require different ethical priorities, and systems that can adapt to these variations will be more widely accepted.
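One way to make a moral framework explicit and adjustable is to separate hard constraints from weighted trade-offs and to store both in a configuration object that can be reviewed and changed for each context. The sketch below applies this to the content-moderation example. The value names, scores, and weights are hypothetical; the point is the structure, not any recommended policy.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

@dataclass
class Policy:
    """An explicit, reviewable ethical configuration for one deployment context."""
    weights: Dict[str, float]                  # how much each value matters here
    forbidden: FrozenSet[str] = frozenset()    # hard constraints: never choose these

# Hypothetical moderation actions, scored 0..1 on each value they serve.
ACTIONS: Dict[str, Dict[str, float]] = {
    "allow":  {"harm_prevention": 0.1, "free_expression": 1.0},
    "label":  {"harm_prevention": 0.6, "free_expression": 0.8},
    "remove": {"harm_prevention": 1.0, "free_expression": 0.0},
}

def decide(policy: Policy, actions: Dict[str, Dict[str, float]] = ACTIONS) -> str:
    """Drop forbidden actions, then pick the best weighted trade-off among the rest."""
    allowed = {name: scores for name, scores in actions.items() if name not in policy.forbidden}

    def weighted(name: str) -> float:
        return sum(policy.weights[value] * s for value, s in allowed[name].items())

    return max(allowed, key=weighted)

expression_first = Policy({"harm_prevention": 0.2, "free_expression": 0.8})
safety_first = Policy({"harm_prevention": 0.8, "free_expression": 0.2})
no_auto_removal = Policy(safety_first.weights, forbidden=frozenset({"remove"}))

print(decide(expression_first), decide(safety_first), decide(no_auto_removal))  # allow remove label
```

Because the priorities live in data rather than in code, a different community or context can change the outcome by editing a reviewable policy, without touching the decision logic.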

A 2023 study by the AI Ethics Institute found that companies implementing explicitly ethical AI frameworks experienced 29% fewer public relations incidents related to their AI systems and 47% higher user trust ratings compared to companies without such frameworks.

"The challenge isn't creating AI that's ethical in some abstract sense, but creating AI that aligns with the specific ethical frameworks of the communities it serves," notes Dr. Timnit Gebru, AI ethics researcher. "This requires ongoing dialogue between technologists and stakeholders."

The Future: Hybrid Intelligence

The most promising direction for AI agency isn't fully autonomous systems that replace humans, but hybrid intelligence that combines human and artificial capabilities. These collaborative systems leverage the complementary strengths of both types of intelligence.

The medical diagnostic system that partners with doctors to identify conditions. The creative assistant that collaborates with designers to explore possibilities. The decision support system that helps executives evaluate complex scenarios. These hybrid approaches recognize that humans and AI excel at different aspects of intelligence.

What distinguishes effective hybrid systems is their thoughtful division of labor between human and artificial agents. AI typically excels at pattern recognition, data processing, and consistency. Humans excel at contextual understanding, creative leaps, and ethical judgment.
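This division of labor can be sketched as confidence-based routing. The model handles cases it is confident about, the uncertain middle band goes to a human, and human verdicts are kept as labeled examples for retraining. The thresholds, the `toy_score` function, and the `HybridTriage` name are hypothetical stand-ins for a trained model and a tuned policy.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Case = Dict[str, float]

@dataclass
class HybridTriage:
    """Route each case to automation or a human based on model confidence."""
    score: Callable[[Case], float]   # model output: estimated probability of fraud
    low: float = 0.2                 # below this, auto-approve (hypothetical threshold)
    high: float = 0.9                # above this, auto-block (hypothetical threshold)
    review_queue: List[Case] = field(default_factory=list)
    labels: List[Tuple[Case, bool]] = field(default_factory=list)

    def route(self, case: Case) -> str:
        p = self.score(case)
        if p < self.low:
            return "auto_approve"
        if p > self.high:
            return "auto_block"
        self.review_queue.append(case)   # ambiguous: a human analyst decides
        return "human_review"

    def record_human_verdict(self, case: Case, is_fraud: bool) -> None:
        """Human judgments become labeled examples the model can learn from."""
        self.labels.append((case, is_fraud))

def toy_score(case: Case) -> float:
    # Hypothetical stand-in for a trained model: larger amounts look riskier.
    return min(case["amount"] / 10_000, 1.0)

triage = HybridTriage(score=toy_score)
print(triage.route({"amount": 500}))     # auto_approve
print(triage.route({"amount": 5_000}))   # human_review
print(triage.route({"amount": 9_500}))   # auto_block
```

Widening the band between `low` and `high` sends more cases to people. Narrowing it automates more, so the two thresholds directly control how work is split between human and AI.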

According to a 2024 Harvard Business Review analysis, organizations implementing hybrid intelligence approaches report 37% higher innovation metrics and 42% better decision outcomes compared to those pursuing either fully automated or traditional human-only approaches.

At 1985, our most successful client implementations have followed this hybrid model. One financial services client implemented a hybrid intelligence system for fraud detection that reduced false positives by 63% while increasing actual fraud detection by 27%. The system uses AI agents to flag suspicious patterns and human analysts to investigate the most complex cases, with each side continuously learning from the other.

The Agency Revolution

The evolution of AI agents represents a fundamental shift in how we think about software. Traditional programs execute instructions. Agents pursue goals. This distinction may seem subtle, but its implications are profound.

As these digital entities become more capable, the nature of our relationship with technology is changing. We're moving from tools we use to partners we collaborate with. From systems we program to systems we guide. From software that serves specific functions to software that serves broader purposes.

The most successful organizations won't be those that simply deploy the most advanced agents, but those that thoughtfully integrate these agents into their human workflows. The goal isn't to replace human agency but to extend it—creating hybrid systems that leverage the unique strengths of both human and artificial intelligence.

At 1985, we're committed to helping our clients navigate this agency revolution. We believe the future belongs not to those who build the most powerful AI, but to those who build the most effective partnerships between human and artificial intelligence.

The agents are here. The question isn't whether they'll transform our world, but how we'll shape that transformation.